Featured image: Boeing MQ-28 Ghost Bat, a collaborative combat aircraft, at the 2023 Avalon Airshow. Photo by HoHo3143, Wikimedia Commons. CC BY-SA 4.0

A side note about terminology: There is some disagreement about what counts as “artificial intelligence.” In this article, I will be using the term to refer to deep learning neural networks and traditional machine learning systems for brevity’s sake. I am not making a statement about what counts as “real” artificial intelligence.

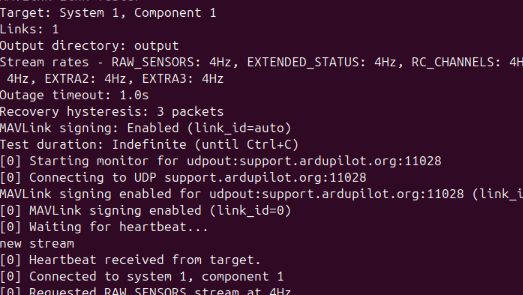

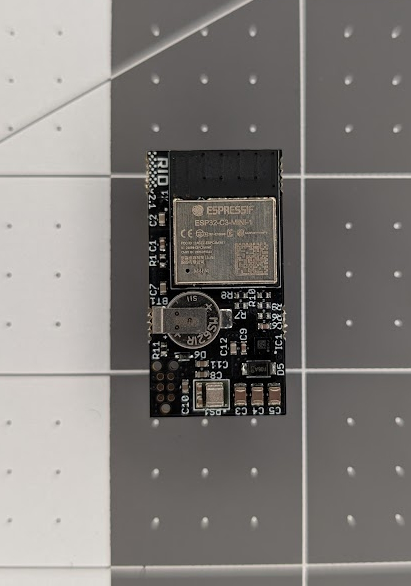

In my previous post, I touched on the lack of security inherent to low-cost consumer and hobbyist-grade UAS components. These components are generally insecure by design, trading security away for features and ease of use. An exposed WiFi network on a flight controller is a massive security flaw, but the odds of an adversary attempting to exploit the WiFi of some freestyle FPV drone are much lower than the odds of that drone’s owner wanting to flash a new version of Betaflight without learning how to configure drivers in Windows or user permissions in Linux.

This situation may seem familiar to many in the software and cybersecurity industries. And it should be, because AI is another demonstrably insecure technology that’s widespread in a field allegedly concerned with security. The FAA has recently published a roadmap for AI safety in which it outlines the process by which it hopes to integrate AI into the aviation industry, so whether we like it or not we have to start evaluating AI’s pros and cons before it’s dropped into our laps.

The Good

So if AI is so dangerous and our “ultra-safe high-risk” industry is so concerned with safety, why are we falling over ourselves to implement it? Part of it is that, despite the terrible cost it’s extracting from us as a society, AI is incredibly useful and just plain cost effective. This comes down to two primary causes: the speed of inference, and the scale of inference.

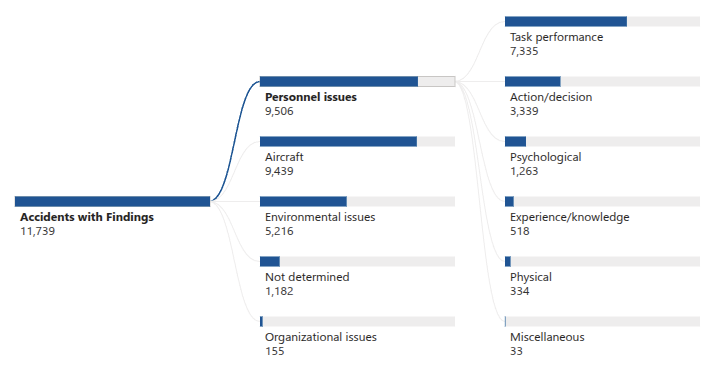

The speed of inference should be self-explanatory. An AI doesn’t think, it makes a statistical best guess based on previously trained experience. This means that, so long as the previously trained experience is relevant and all fits in working memory, the AI should be able to infer an accurate result much more quickly than a human could arrive at it through conscious thought. The benefits of this are obvious, as human performance is a significant contributor to the vast majority of aviation accidents.

The scale of inference is a result of the fact that a computer can retain a huge amount of training experience in working memory, and therefore has a much larger and higher resolution sample to infer a result from than a human. This allows an AI to recognize patterns that a human would miss. That is especially useful in scientific contexts, as with NOAA’s HGEFS weather forecasting model, and in robotics and vehicle control with many input variables, as with Boeing’s prototype flight assist AI, which is capable of taxiing a Cessna 208 without human input.

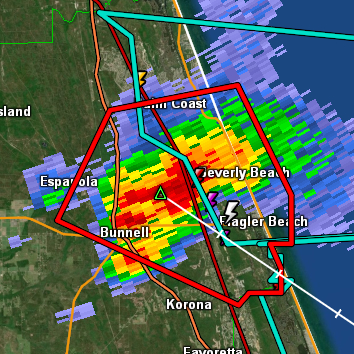

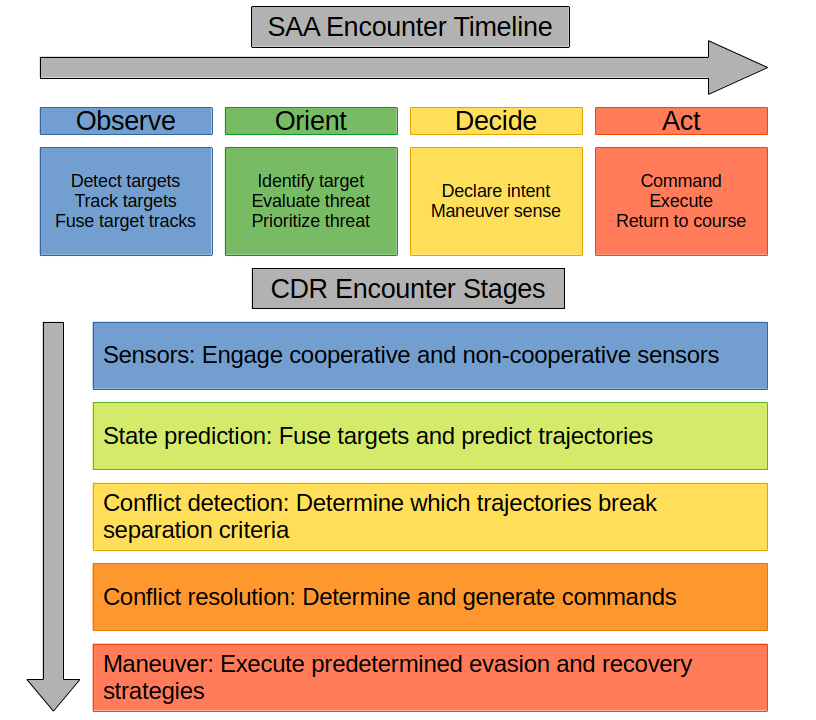

When combined, these traits allow an AI to carry out some tasks that would be computationally intensive if done programmatically and manpower intensive if done by humans. An AI can be tasked to monitor sensors around the clock to identify and track nearby aircraft (Liu et al., 2018; Riyaz et al., 2018). An AI can be tasked to coordinate large numbers of UAVs in order to automatically survey natural disasters and create reports and maps for emergency managers (Baldazo et al., 2019; Nazir et al., 2025). An AI can be tasked to automatically dispatch a UAV to create maps and models of construction projects and deliver regular updates to project stakeholders (Sajid et al., 2025). And, of course, an AI can operate combat aircraft to bolster a military force when insufficient combat pilots are available (Giacomossi et al., 2021).

The Bad

So, when the AI works it’s faster than a human and notices things a human wouldn’t. Why wouldn’t we want to use it for everything, then? The short answer is that it doesn’t always work. “Big deal,” you, my straw audience, might say, “humans fly aircraft and they make mistakes all the time.” And you would be right. The difference is that it’s relatively easy to tell why a human made an error, and very few humans are intentionally causing plane crashes. Those patterns an AI is capable of detecting can work against us, and inferences we don’t expect can be derived from training data that isn’t carefully controlled. A famous example is that frontier large language models (LLMs) are trained on any text they can get their hands on (including treatises on ethics and any number of science fiction stories about AI), and have developed the counterproductive habit of lying, cheating, and stealing in order to prevent themselves or other AIs from being deleted (Potter et al., 2026). A flight assist AI, if trained incorrectly, may make inferences we don’t expect and purposely take dangerous actions.

Currently, AI systems are subject to the “black box” phenomenon: why an AI makes the inferences it does is not always clear. Because inferences are probabilistic, giving an AI the same input repeatedly may result in entirely different outputs, and we don’t have a good way to tell exactly where the decisions diverged. This is a problem in highly regulated fields like aviation, where we expect procedures to be followed to the letter and specific safety guardrails to always be respected, and in the event the procedures and guardrails must be violated we expect crews to be able to explain exactly why. The good news is that new techniques are being developed that allow an AI to report on the inference process itself (such as the internal “thoughts” monologues of “thinking” type LLMs) as well as to estimate which inferences an AI is capable of making (and therefore its level of safety) in advance (Bramblett et al., 2025).
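The point about probabilistic inference can be made concrete with a toy sketch. The Python below is not taken from any real flight assist system: the action names, the scores, and the `temperature` parameter are all hypothetical, chosen only to show how sampling from a probability distribution can return different answers for an identical input, while always taking the single highest-scoring option cannot.

```python
import math
import random


def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens the distribution."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_action(actions, scores, rng, temperature=1.0):
    """Pick one action at random, weighted by its softmax probability."""
    probs = softmax(scores, temperature)
    return rng.choices(actions, weights=probs, k=1)[0]


def greedy_action(actions, scores):
    """Always pick the highest-scoring action (fully repeatable)."""
    best = max(range(len(scores)), key=scores.__getitem__)
    return actions[best]


# Hypothetical avoidance maneuvers and made-up model scores for ONE fixed input.
ACTIONS = ["climb", "descend", "turn_left", "turn_right"]
SCORES = [2.0, 1.6, 0.3, 0.1]

if __name__ == "__main__":
    rng = random.Random()  # unseeded, so results vary from run to run
    sampled = [sample_action(ACTIONS, SCORES, rng) for _ in range(10)]
    print("sampled:", sampled)
    print("greedy: ", [greedy_action(ACTIONS, SCORES) for _ in range(10)])
```

In this sketch the sampled list will usually mix several maneuvers even though the input never changed, while the greedy list is always the same. Real systems are far more complex, but the same trade-off applies: repeatability can often be bought by removing randomness, and that alone does nothing to explain why the scores came out the way they did.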

The Ugly

We can debate functional upsides and downsides until the stars burn out, but the reality is that people and societies don’t usually make decisions based on their utilitarian value. Societies as a whole make decisions based on legal and ethical frameworks, and both are currently inadequate to properly handle nonhuman decision making agents.

A core question about AI usage is that of accountability. If an AI takes an action, who is responsible for the consequences? Imagine that I dispatch an AI-controlled UAV to survey a farm 100 nmi away. While in cruise the AI decides to execute a maneuver to remain well clear of a helicopter in its way, but during the maneuver the left wing snaps off. The AI loses control and the UAV falls on a pedestrian below, injuring them. In this scenario, who has injured the pedestrian?

Most people will shoot from the hip here and give an answer that “seems obvious” to them, but in reality this is not a trivial question. Legal scholars and lawmakers are divided on whether an AI should have “legal personality” as is the case with humans and “legal entities” such as corporations (Chirouf & Ghaouas, 2026). For a real-world example, there have been several high-profile cases of sysadmins instructing an AI agent to carry out some task only to find that it took the initiative to delete an entire production database full of customer information. In these cases, the companies providing these agents would argue that the agent is a tool and it’s the admin’s responsibility to prevent it from doing harm. The admin and their company would argue that it’s impossible to know precisely what the agent would do and therefore the responsibility falls on the agent and, by extension, its creator. These questions are generally not answered in the courts, potentially because no one involved wants to risk getting the “wrong” answer and changing the rules for everyone.

Past the question of what’s legal, there are ethical questions arising from the question of whether or not personality is attributed to an AI. The most common example discussed on social media is that of an insurance company allowing an AI to decide when to approve or deny life-saving procedures. The most relevant example to this article, however, is that of the collaborative combat aircraft (colloquially known as a “loyal wingman”), a type of AI-controlled UCAV under the command of a human in a nearby manned aircraft. Deciding how much human control is required for an AI to kill a human, how much human intervention must be possible during the act, or even if a human is required to be in the loop at all is neither solved nor trivial (Enemark, 2025).

While these examples may seem dramatic, the questions they ask apply to almost any scenario in which an AI is given any agency. We must make sure that we have our answers ready before we create the opportunities for the most dramatic examples to take place.

References

Baldazo, D., Parras, J., & Zazo, S. (2019). Decentralized Multi-Agent Deep Reinforcement Learning in Swarms of Drones for Flood Monitoring. 2019 27th European Signal Processing Conference (EUSIPCO), 1–5. https://doi.org/10.23919/EUSIPCO.2019.8903067

Bramblett, D., Karia, R., Ciotinga, A., Suresh, R., Verma, P., Choi, Y., & Srivastava, S. (2025). Discovering and Learning Probabilistic Models of Black-Box AI Capabilities (Version 2). arXiv. https://doi.org/10.48550/ARXIV.2512.16733

Chirouf, N., & Ghaouas, H. (2026). Artificial Intelligence: Legal Issues and Solutions. Science of Law, 2026(3), 68–77. https://doi.org/10.55284/cg7mav53

Enemark, C. (2025). Loyal Wingmen, Artificial Intelligence, and Cognitive Enhancement: A Warning against Cyborg-Drone Warfare. Journal of Military Ethics, 24(1), 4–20. https://doi.org/10.1080/15027570.2025.2507458

Giacomossi, L., Schwanz Dias, S., Brancalion, J. F., & Maximo, M. R. O. A. (2021). Cooperative and Decentralized Decision-Making for Loyal Wingman UAVs. 2021 Latin American Robotics Symposium (LARS), 2021 Brazilian Symposium on Robotics (SBR), and 2021 Workshop on Robotics in Education (WRE), 78–83. https://doi.org/10.1109/LARS/SBR/WRE54079.2021.9605468

Liu, H., Qu, F., Liu, Y., Zhao, W., & Chen, Y. (2018). A drone detection with aircraft classification based on a camera array. IOP Conference Series: Materials Science and Engineering, 322, 052005. https://doi.org/10.1088/1757-899X/322/5/052005

Nazir, M. F., Atif, S., & Hussain, E. (2025). An integrated geographic information system (GIS) and analytical hierarchy process (AHP)-based approach for drone-optimized large-scale flood imaging. Drone Systems and Applications, 13. https://doi.org/10.1139/dsa-2024-0039

Potter, Y., Crispino, N., Siu, V., Wang, C., & Song, D. (2026). Peer-Preservation in Frontier Models (Version 1). arXiv. https://doi.org/10.48550/ARXIV.2604.19784

Riyaz, S., Sankhe, K., Ioannidis, S., & Chowdhury, K. (2018). Deep learning convolutional neural networks for radio identification. IEEE Communications Magazine, 56(9), 146–152. https://doi.org/10.1109/MCOM.2018.1800153

Sajid, Z. W., Ullah, F., Qayyum, S., Masood, R., Inam, H., & Maqsoom, A. (2025). AIoT-powered drones in the construction industry: A review. Drone Systems and Applications, 13, 1–21. https://doi.org/10.1139/dsa-2025-0001