Featured image: Simulation of Naples, Italy airspace in IVAO’s Aurora ATC simulator. Image by Giovanni Rizza, Wikimedia Commons. CC BY-SA 4.0.

A side note about terminology: “Detect and Avoid” (DAA) and “Sense and Avoid” (SAA) are commonly used to refer to the same process. I have elected to use “Detect and Avoid” to conform to the terminology used by the FAA in its proposed Part 108, which will contain most of the regulatory basis for beyond visual line of sight (BVLOS) DAA procedures. When discussing evasive maneuvers, I use the term “sense” or “maneuver sense” to refer to a selected maneuver and its direction, as in a TCAS Resolution Advisory.

As we begin to rely more and more on large UAS platforms with hybrid electric powerplants and multiple hours of endurance, it becomes increasingly difficult to carry out missions without going BVLOS. How, then, when we don’t have visual contact with the UAV, do we make sure it isn’t abruptly filled with bloodlust and attempting to ram unsuspecting SR22s? That task falls to the Detect and Avoid (DAA) system.

Detect and Avoid: Primary Functions

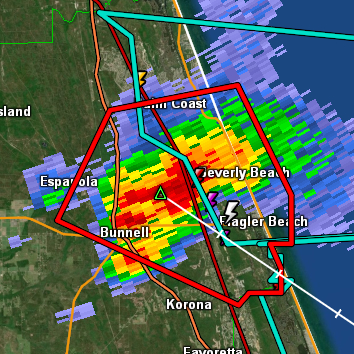

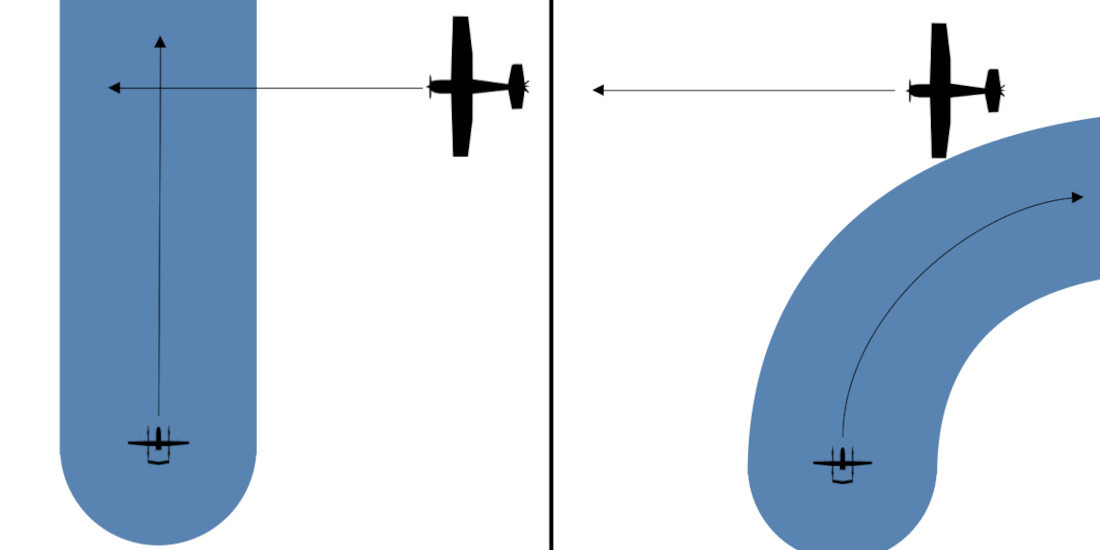

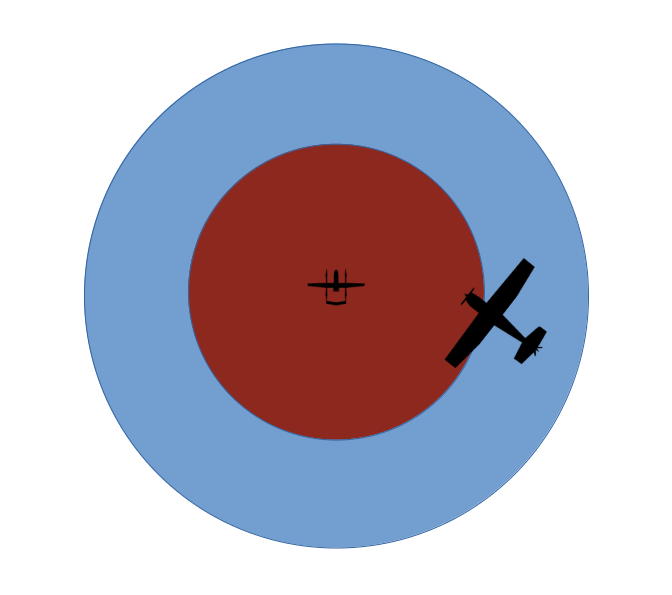

There are two primary functions of a DAA system. The first is to ensure that the UAV “remains well clear” (RWC) of other aircraft and, depending on UAS design, potentially wildlife or structures. This is similar to the Part 91 requirement for a manned aircraft to not pass over, under, or in front of another aircraft unless “well clear.” To determine if the UAV is “well clear” of other aircraft, the DAA system will create an imaginary RWC area around it and its course. If an object (or a track that object is following) enters the RWC area, the DAA system will determine how the UAV can maneuver to avoid it.

The second primary function of the DAA system is collision avoidance (CA). Within the RWC area is a second, smaller CA area. If an object enters this CA area, the DAA system will consider it a separation loss and assume the UAV is in immediate danger. During a CA situation, the DAA system will take more drastic measures to regain separation, possibly including unauthorized airspace incursions.
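To make the two nested areas concrete, here is a minimal Python sketch that models each one as a cylinder centred on the UAV and sorts a target into clear, RWC, or CA based on its current offset. The cylinder shape, the `Cylinder` and `classify` names, and the sizes are all assumptions for illustration, not values from Part 108 or any DAA standard. A real system would also test predicted positions along both tracks, not just the present one.

```python
import math
from dataclasses import dataclass
from enum import Enum


class Zone(Enum):
    CLEAR = "clear"
    RWC = "remain well clear"
    CA = "collision avoidance"


@dataclass(frozen=True)
class Cylinder:
    """A protected volume centred on the UAV: horizontal radius and vertical half-height, in feet."""
    radius_ft: float
    half_height_ft: float

    def contains(self, dx_ft: float, dy_ft: float, dz_ft: float) -> bool:
        return math.hypot(dx_ft, dy_ft) <= self.radius_ft and abs(dz_ft) <= self.half_height_ft


# Placeholder sizes for illustration only, not values taken from any rule or standard.
RWC_VOLUME = Cylinder(radius_ft=4000.0, half_height_ft=450.0)
CA_VOLUME = Cylinder(radius_ft=500.0, half_height_ft=100.0)


def classify(dx_ft: float, dy_ft: float, dz_ft: float) -> Zone:
    """Classify a target by its current offset from the UAV (east, north, up)."""
    # The CA volume sits inside the RWC volume, so test the smaller one first.
    if CA_VOLUME.contains(dx_ft, dy_ft, dz_ft):
        return Zone.CA
    if RWC_VOLUME.contains(dx_ft, dy_ft, dz_ft):
        return Zone.RWC
    return Zone.CLEAR


print(classify(3000.0, 0.0, 200.0))   # Zone.RWC
print(classify(300.0, 200.0, -50.0))  # Zone.CA
print(classify(9000.0, 0.0, 0.0))     # Zone.CLEAR
```

Checking the inner volume first matters: every point in the CA cylinder is also in the RWC cylinder, so the more severe classification has to win.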

Detect and Avoid: By the Numbers

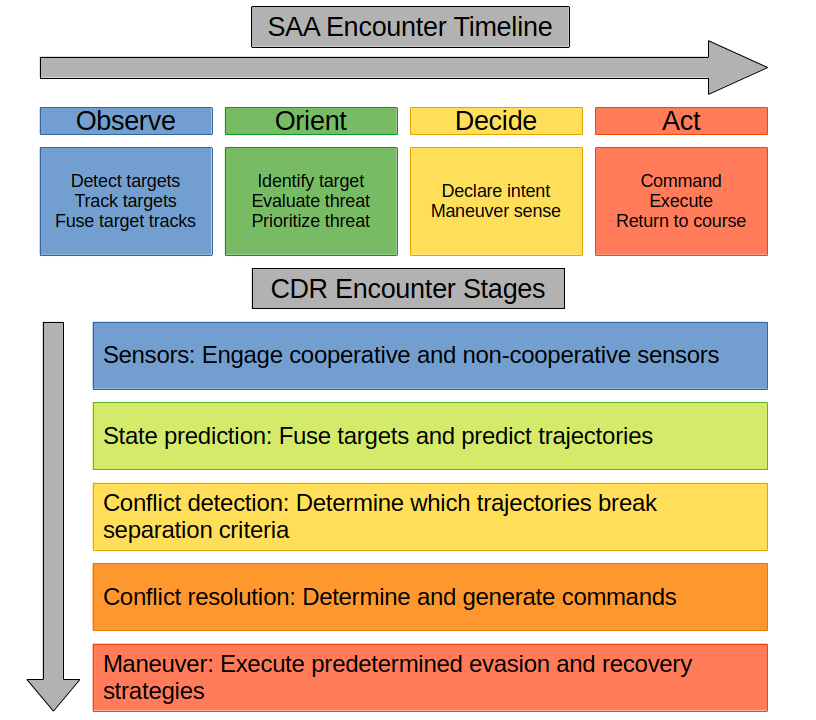

Everything discussed so far is relatively intuitive. If something is too close to us, or will be too close to us in the future, we get out of its way. Unfortunately, computers don’t have much intuition, so we have to break the process down into specific tasks that different components of the UAS can evaluate programmatically. Any number of DAA frameworks can keep the process human-readable; two of them are illustrated above.

For the remainder of this post I will focus on the observe–orient–decide–act (OODA) encounter timeline because of its finer granularity, but much of the information also applies to the conflict detection and resolution (CDR) framework.

Observation

The first and most obvious set of tasks is to observe our surroundings. Observation is ideally carried out at all times, and the rest of our tasks are predicated on data collected during this step. There are three components to observation:

- Detect targets: Before we can do anything else, we must know that something is nearby.

- Track targets: Once a target is detected, we must build a track using repeated observations of its position and speed in order to predict where it will be in the future.

- Fuse target tracks: Ideally the UAS has multiple sensors with which to detect an object, but that means an object will generate multiple tracks. To get an accurate picture of our surroundings we must detect when multiple tracks are created by the same object and fuse them into one highly detailed target.
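Tracking in particular benefits from an example. One of the simplest ways to turn repeated position fixes into a position-and-velocity estimate is an alpha-beta filter, sketched below in Python. It is a swapped-in illustration, not a claim about how any particular DAA product tracks targets (practical trackers more commonly use Kalman-family filters), and the class name and gain values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AlphaBetaTrack:
    """A 2D alpha-beta tracker: turns noisy position fixes into a smoothed position and velocity."""
    x: float            # east position estimate, m
    y: float            # north position estimate, m
    vx: float = 0.0     # east velocity estimate, m/s
    vy: float = 0.0     # north velocity estimate, m/s
    alpha: float = 0.5  # hypothetical gain applied to the position residual
    beta: float = 0.1   # hypothetical gain applied to the velocity correction

    def predict(self, dt: float) -> tuple[float, float]:
        """Extrapolate the position dt seconds ahead, assuming constant velocity."""
        return self.x + self.vx * dt, self.y + self.vy * dt

    def update(self, meas_x: float, meas_y: float, dt: float) -> None:
        """Blend in a position fix taken dt seconds after the previous one."""
        pred_x, pred_y = self.predict(dt)
        res_x, res_y = meas_x - pred_x, meas_y - pred_y  # measurement minus prediction
        self.x = pred_x + self.alpha * res_x
        self.y = pred_y + self.alpha * res_y
        self.vx += (self.beta / dt) * res_x
        self.vy += (self.beta / dt) * res_y


# A target flying east at 100 m/s, observed once per second without noise.
track = AlphaBetaTrack(x=0.0, y=0.0)
for t in range(1, 51):
    track.update(100.0 * t, 0.0, dt=1.0)
print(round(track.vx, 1), track.predict(10.0))
```

With noiseless fixes the velocity estimate settles at roughly 100 m/s, and `predict` gives the "where will it be" answer the tracking step exists for. With real sensor noise, larger gains follow maneuvers faster but smooth less.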

Sensors

Our UAS (hopefully) lacks eyes, so observation is instead carried out by sensor systems, both onboard and remote. Sensors are broadly separated into cooperative and non-cooperative, then further into active and passive. Cooperative sensors rely on equipment carried by the target itself, such as a transponder or broadcast transmitter, while non-cooperative sensors do not. Active sensors are emissive and must direct energy toward a target to detect it, while passive sensors only receive energy from the target and environment (Barnhart et al., 2021; Nichols et al., 2019).

Cooperative sensors available to us vary depending on the type of target we expect to detect. Manned aircraft are often equipped with transponders that can be interrogated and with ADS-B Out equipment whose automatic broadcasts we can receive. A UAS operating under Part 107 may not operate with either of those transmitting (14 CFR § 107.52 et seq.), but it can instead be equipped with a Remote ID broadcast system, which serves some of the same functions (14 CFR § 89).

At time of writing, a UAS operating BVLOS under the FAA’s proposed Part 108 would be required to yield right-of-way to “electronically conspicuous” aircraft (14 CFR Proposed § 108.195). This means that the UAS must be able both to detect Universal Access Transceivers (ADS-B installations and handheld equivalents) and to fuse the tracks generated from them with those generated by its other sensors. A Part 108-compliant UAS must also be able to communicate with an automated data service provider (ADSP), described in the proposed Part 146, which acts as a type of cooperative pseudo-sensor (14 CFR Proposed §§ 108.190, 146).
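Fusion can be sketched in a few lines as well. The Python below pairs each cooperative track (from ADS-B, say) with the nearest onboard track inside a statistical gate, then merges the pair with an inverse-variance weighted average so the more precise source dominates. Greedy nearest-neighbour association, isotropic position variance, the 3-sigma gate, and all names here are simplifying assumptions; this is not how Part 108 or any ADSP specifies fusion.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Track:
    source: str      # illustrative labels such as "adsb" or "radar"
    x: float         # east position, m
    y: float         # north position, m
    variance: float  # position variance, m^2 (isotropic, a simplifying assumption)


def fuse(a: Track, b: Track) -> Track:
    """Inverse-variance weighted average of two position estimates."""
    wa, wb = 1.0 / a.variance, 1.0 / b.variance
    return Track(
        source=f"{a.source}+{b.source}",
        x=(wa * a.x + wb * b.x) / (wa + wb),
        y=(wa * a.y + wb * b.y) / (wa + wb),
        variance=1.0 / (wa + wb),  # the fused estimate is tighter than either input
    )


def associate_and_fuse(cooperative: list[Track], onboard: list[Track],
                       gate_sigma: float = 3.0) -> list[Track]:
    """Greedy nearest-neighbour association: fuse each cooperative track with the closest
    onboard track inside the gate, and keep any unmatched tracks as they are."""
    fused: list[Track] = []
    unmatched = list(onboard)
    for c in cooperative:
        best, best_d = None, math.inf
        for o in unmatched:
            d = math.hypot(c.x - o.x, c.y - o.y)
            gate = gate_sigma * math.sqrt(c.variance + o.variance)
            if d <= gate and d < best_d:
                best, best_d = o, d
        if best is None:
            fused.append(c)
        else:
            unmatched.remove(best)
            fused.append(fuse(c, best))
    return fused + unmatched


adsb = Track("adsb", 1000.0, 2000.0, 25.0)     # roughly 5 m sigma
radar = Track("radar", 1010.0, 1995.0, 400.0)  # roughly 20 m sigma
print(associate_and_fuse([adsb], [radar]))
```

The fused position lands much closer to the ADS-B report than to the radar return, because its variance is sixteen times smaller.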

Non-cooperative sensors available to us include passive optical and thermal sensors (cameras, if you will), active laser rangefinding systems such as lidar, radar, and active or passive acoustic sensors (Barnhart et al., 2021; Sabins & Ellis, 2020).

| Sensor | Energy Characteristics | Cooperation | Notes |
|---|---|---|---|
| VIS Cameras | Passive, visible light-based | Non-cooperative | Includes standard and low-light amplification cameras |
| IR Cameras | Passive, infrared light-based | Non-cooperative | Includes NIR/MWIR/LWIR, commonly implemented in FLIR systems |
| Laser | Active, UV or infrared-based | Non-cooperative | Includes lidar systems and traditional laser rangefinders |
| Radar | Active, RF-based | Non-cooperative | Includes onboard X-band radars, ground-based ASR, etc. |
| Acoustic | Active or passive, sound-based | Non-cooperative | Includes standard acoustic sensors and ultrasonic sensors |
| Transponder | Active, radio-based | Cooperative | Systems that must be interrogated, e.g. Mode C/S |
| Transceivers | Passive, radio-based | Cooperative | Automatic broadcast systems, e.g. UAT/ADS-B, Remote ID |
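The two-way split in the table can be captured as a small data structure. In the Python sketch below, each sensor records whether it is active and, if it is cooperative, what equipment the target must carry. That makes it easy to ask which sensors remain useful against a target with no transponder or broadcast equipment, or which ones let the UAS observe without emitting anything. The equipment labels and sensor names are hypothetical, and splitting acoustic sensors into separate active and passive rows is a simplification.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SensorType:
    name: str
    active: bool                    # True if the sensor emits energy toward the target
    requires: Optional[str] = None  # equipment the target must carry; None means non-cooperative

    @property
    def cooperative(self) -> bool:
        return self.requires is not None


# Rows follow the sensor table; the equipment labels "transponder" and "broadcast" are hypothetical.
SENSORS = [
    SensorType("VIS camera", active=False),
    SensorType("IR camera", active=False),
    SensorType("Laser/lidar", active=True),
    SensorType("Radar", active=True),
    SensorType("Acoustic (passive)", active=False),
    SensorType("Acoustic (active/ultrasonic)", active=True),
    SensorType("Transponder interrogator", active=True, requires="transponder"),
    SensorType("ADS-B/UAT/Remote ID receiver", active=False, requires="broadcast"),
]


def usable_against(target_equipment: set[str]) -> list[str]:
    """Non-cooperative sensors work on any target; cooperative ones need matching target equipment."""
    return [s.name for s in SENSORS if not s.cooperative or s.requires in target_equipment]


def passive_only() -> list[str]:
    """Sensors that detect without emitting anything themselves."""
    return [s.name for s in SENSORS if not s.active]


print(usable_against(set()))          # what is left against a fully non-cooperative target
print(usable_against({"broadcast"}))  # e.g. a target broadcasting ADS-B Out or Remote ID
print(passive_only())
```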

Orientation

Once we know that a target exists, it’s helpful to know what we’re dealing with. Orientation is the process through which we identify targets and determine what level of threat they pose. There are three components to orientation:

- Identify target: Before we can prioritize targets we must determine what characteristics they exhibit and potentially what they are.

- Evaluate threat: If we do nothing about this target, what will happen to us? Will we pass each other harmlessly, risk violating our RWC area, or risk a collision?

- Prioritize threat: Which of the targets we’re currently tracking are the most dangerous? Which can be safely ignored? More significant threats must be handled before less significant ones.
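Threat evaluation and prioritization can be sketched with a closest point of approach (CPA) calculation: project each target's relative motion forward, find when and by how much it will miss us, and rank the results. The Python below works only in the horizontal plane and assumes straight-line, constant-speed motion. The radii, the level names, and the ranking rule (worst predicted outcome first, then soonest CPA) are hypothetical choices for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Relative:
    """Target state relative to the UAV in the horizontal plane (altitude ignored for brevity)."""
    target_id: str
    px: float  # relative east position, m
    py: float  # relative north position, m
    vx: float  # relative east velocity, m/s
    vy: float  # relative north velocity, m/s


def closest_point_of_approach(r: Relative) -> tuple[float, float]:
    """(time to CPA in s, miss distance at CPA in m), assuming both aircraft hold course and speed."""
    v2 = r.vx ** 2 + r.vy ** 2
    # With no relative motion, or if the target is already moving away, the closest point is now.
    t = 0.0 if v2 == 0.0 else max(0.0, -(r.px * r.vx + r.py * r.vy) / v2)
    return t, math.hypot(r.px + r.vx * t, r.py + r.vy * t)


# Hypothetical radii for illustration only.
RWC_RADIUS_M = 1200.0
CA_RADIUS_M = 150.0
LEVELS = {0: "ignore", 1: "RWC", 2: "CA"}


def threat_level(miss_m: float) -> int:
    if miss_m <= CA_RADIUS_M:
        return 2
    if miss_m <= RWC_RADIUS_M:
        return 1
    return 0


def prioritize(targets: list[Relative]) -> list[tuple[str, str, float]]:
    """Drop harmless targets, then sort: worst predicted outcome first, soonest CPA breaking ties."""
    ranked = []
    for r in targets:
        t_cpa, miss = closest_point_of_approach(r)
        level = threat_level(miss)
        if level > 0:
            ranked.append((level, t_cpa, r.target_id))
    ranked.sort(key=lambda item: (-item[0], item[1]))
    return [(target_id, LEVELS[level], t_cpa) for level, t_cpa, target_id in ranked]


targets = [
    Relative("A", 3000.0, 0.0, -100.0, 0.0),    # head-on, 30 s out
    Relative("B", 2000.0, 800.0, -100.0, 0.0),  # passes 800 m abeam in 20 s
    Relative("C", 3000.0, 0.0, 50.0, 0.0),      # moving away
    Relative("D", 500.0, 0.0, -100.0, 0.0),     # head-on, 5 s out
]
print(prioritize(targets))
# [('D', 'CA', 5.0), ('A', 'CA', 30.0), ('B', 'RWC', 20.0)]
```

Target C is dropped as safely ignorable, and D outranks A even though both are predicted collisions, because D arrives sooner.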

Target identification is important for deciding what level of threat the target poses and what type of evasion strategy will be used later. Information previously gathered by our sensors can be re-used by either traditional algorithms or machine learning models to determine what class of target is being tracked (Opromolla & Fasano, 2021; Said Hamed Alzadjali et al., 2024). For this purpose, target characteristics such as size, speed, emissions, presence of cooperative sensors, ADSP data, etc. can allow us to determine the target class (UAS, manned aircraft, bird, structure, terrain) with some degree of confidence (Barnhart et al., 2021).
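A rule-based identifier is the easiest version to show. The Python sketch below checks cooperative equipment first, since it is the strongest evidence of what a target is, then falls back to crude kinematic rules. Every threshold and confidence value is a hypothetical placeholder, and a production system would lean on richer features or trained models like those cited above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Observation:
    ground_speed_mps: float
    est_size_m: Optional[float] = None  # apparent size from an optical or radar return, if known
    transponder_or_adsb: bool = False
    remote_id: bool = False


def classify_target(obs: Observation) -> tuple[str, float]:
    """Return (class, confidence). Every threshold and confidence here is a hypothetical placeholder."""
    # Cooperative equipment is the strongest evidence, so check it first.
    if obs.remote_id:
        return "UAS", 0.95
    if obs.transponder_or_adsb:
        return "manned aircraft", 0.95
    # Crude kinematic rules. A hovering multirotor would be misfiled as a structure here,
    # which is one reason richer features or learned models are attractive.
    if obs.ground_speed_mps < 0.5:
        return "structure or terrain", 0.6
    if obs.est_size_m is not None and obs.est_size_m < 2.0 and obs.ground_speed_mps < 25.0:
        return "bird or small UAS", 0.5
    if obs.ground_speed_mps > 40.0:
        return "manned aircraft", 0.7
    return "unknown", 0.0


print(classify_target(Observation(12.0, 0.8)))  # ('bird or small UAS', 0.5)
```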

Decision

Anyone familiar with TCAS is already familiar with the decision tasks, as TCAS carries out a similar process of declaring intent and selecting an evasive maneuver sense for manned aircraft. Now that we know that one or more threats are present and which are the most threatening, we can decide what to do about them. There are two components to the decision:

- Declare intent: The DAA system informs the pilot or flight controller that a course correction or evasive maneuver is needed.

- Maneuver sense: The DAA system determines the appropriate maneuver and sense to correct the problem and informs the pilot, flight controller, and/or ATC.

In order to make an appropriate decision, the DAA system requires information about the target, the UAV itself, and the airspace it’s operating in. Where possible, the DAA system must avoid the target while staying within its allowed airspace, avoiding crossing senses (e.g. climbing or descending across the target’s altitude), and complying with yielding requirements. In some situations, the DAA system may also be required to coordinate with ATC before proceeding to the final tasks.
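This kind of constraint juggling can be sketched as a search over candidate senses that relaxes soft constraints one at a time until something qualifies. The Python below considers only vertical senses and only two constraints (avoid crossing the target's altitude, stay inside the allowed airspace), leaving out yielding requirements and ATC coordination. It ranks avoiding a crossing above staying inside the airspace, consistent with the earlier point that a CA maneuver may justify an airspace incursion, but that ordering, the 300 ft step, and all names are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Situation:
    own_alt_ft: float
    target_alt_ft: float
    floor_ft: float         # lower limit of the airspace the UAS is allowed to use
    ceiling_ft: float       # upper limit of the airspace the UAS is allowed to use
    step_ft: float = 300.0  # hypothetical altitude change commanded by a vertical sense


def select_vertical_sense(s: Situation) -> str:
    """Pick "climb" or "descend", relaxing soft constraints only when nothing satisfies them all."""

    def avoids_crossing(sense: str) -> bool:
        # Climbing crosses a target above us; descending crosses a target below us.
        if sense == "climb":
            return s.target_alt_ft <= s.own_alt_ft
        return s.target_alt_ft >= s.own_alt_ft

    def stays_in_airspace(sense: str) -> bool:
        if sense == "climb":
            return s.own_alt_ft + s.step_ft <= s.ceiling_ft
        return s.own_alt_ft - s.step_ft >= s.floor_ft

    senses = ("climb", "descend")
    # Highest priority first; this ordering is an assumption, not taken from any standard.
    constraints = [avoids_crossing, stays_in_airspace]
    # Drop the lowest-priority constraint until some sense satisfies everything that remains.
    for keep in range(len(constraints), -1, -1):
        active = constraints[:keep]
        for sense in senses:
            if all(check(sense) for check in active):
                return sense
    return senses[0]  # not reached: with no constraints left, the first sense always qualifies


print(select_vertical_sense(Situation(1000.0, 1200.0, 0.0, 2000.0)))  # descend
```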

At time of writing, a UAS operating BVLOS under the proposed Part 108 has different maneuvering options depending on the airspace, separation, and whether or not the target is “electronically conspicuous.” Certain airspaces require the UAS to yield right-of-way to all manned aircraft, while others only require it to yield to “electronically conspicuous” manned aircraft. At certain distances the UAS may only be allowed to pass behind the target, while at others the DAA system may also be able to make a TCAS-style over/under sense decision (14 CFR Proposed § 108.195).
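Because the specific airspace categories and distances in the proposed rule may change before it is finalized, the Python below only encodes the shape of the logic described above: an airspace-dependent yielding test and a distance-banded lookup of permitted senses. The policy names, the band table, and its distances are hypothetical placeholders, not values from proposed § 108.195.

```python
from enum import Enum
from typing import Sequence


class AirspacePolicy(Enum):
    YIELD_TO_ALL_MANNED = "yield to all manned aircraft"
    YIELD_TO_CONSPICUOUS_ONLY = "yield only to electronically conspicuous manned aircraft"


def must_yield(policy: AirspacePolicy, target_manned: bool, target_conspicuous: bool) -> bool:
    """Whether the UAS must give way under the two airspace policies described in the text."""
    if not target_manned:
        return False  # this sketch only models the manned-aircraft cases
    if policy is AirspacePolicy.YIELD_TO_ALL_MANNED:
        return True
    return target_conspicuous


Band = tuple[float, frozenset[str]]  # (upper distance bound in metres, permitted senses)

# Purely hypothetical table; the real distances and the senses each permits come from the rule text.
HYPOTHETICAL_BANDS: list[Band] = [
    (1000.0, frozenset({"pass behind"})),
    (5000.0, frozenset({"pass behind", "climb", "descend"})),
]


def allowed_senses(distance_m: float, bands: Sequence[Band]) -> frozenset[str]:
    """Return the senses permitted at this distance, scanning bands sorted by increasing distance."""
    for upper_m, senses in bands:
        if distance_m <= upper_m:
            return senses
    return frozenset()  # outside every band in the supplied table


print(must_yield(AirspacePolicy.YIELD_TO_CONSPICUOUS_ONLY, True, False))  # False
print(sorted(allowed_senses(3000.0, HYPOTHETICAL_BANDS)))  # ['climb', 'descend', 'pass behind']
```

Keeping the band table as data rather than hard-coded branches means the thresholds can be updated when the final rule is published without touching the decision logic.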

Action

Once we have a plan of attack, it’s time to act. There are three components to the action:

- Command: The pilot or flight controller commands the UAS to initiate the maneuver, either through manipulating the controls or by automated process.

- Execute: The UAV itself executes the maneuver within the specified window and the DAA system verifies its effect.

- Return to course: The UAS decides how to return to its original course or become established on an amended course.

During these tasks, it’s critical that the DAA system continue to track all involved targets and re-evaluate the threats they pose. An unexpected maneuver from a target being avoided may require a different maneuver to counteract, or may escalate an RWC situation into a CA situation. Similarly, a successful CA maneuver will likely be followed by an RWC action, preventing the UAS from returning to course until it’s entirely clear of the target.
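The escalation and follow-up behaviour described here maps naturally onto a small state machine that is re-evaluated every cycle. In the Python sketch below, `zone` is the most severe zone across all tracked targets and `on_course` says whether the UAV has rejoined its original or amended course. The mode names and transition rules are an illustrative assumption, not a reference design.

```python
from enum import Enum, auto


class Zone(Enum):
    CLEAR = auto()
    RWC = auto()
    CA = auto()


class Mode(Enum):
    NOMINAL = auto()
    RWC_MANEUVER = auto()
    CA_MANEUVER = auto()
    RETURN_TO_COURSE = auto()


def next_mode(mode: Mode, zone: Zone, on_course: bool) -> Mode:
    """One re-evaluation cycle, driven by the most severe zone among all tracked targets."""
    if zone is Zone.CA:
        return Mode.CA_MANEUVER  # escalate from any mode
    if zone is Zone.RWC:
        # Covers a new RWC encounter, the follow-up after a CA, and re-entry while returning.
        return Mode.RWC_MANEUVER
    # From here on every target is clear.
    if mode in (Mode.RWC_MANEUVER, Mode.CA_MANEUVER):
        return Mode.RETURN_TO_COURSE
    if mode is Mode.RETURN_TO_COURSE:
        return Mode.NOMINAL if on_course else Mode.RETURN_TO_COURSE
    return Mode.NOMINAL


mode = Mode.NOMINAL
history = []
cycles = [(Zone.RWC, False), (Zone.CA, False), (Zone.CA, False),
          (Zone.RWC, False), (Zone.CLEAR, False), (Zone.CLEAR, True)]
for zone, on_course in cycles:
    mode = next_mode(mode, zone, on_course)
    history.append(mode.name)
print(history)
# ['RWC_MANEUVER', 'CA_MANEUVER', 'CA_MANEUVER', 'RWC_MANEUVER', 'RETURN_TO_COURSE', 'NOMINAL']
```

Note that the UAV cannot jump from a CA maneuver straight back to nominal flight while any target remains in the RWC area; it has to pass through the follow-up RWC mode first.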

At time of writing, a UAS operating BVLOS under the proposed Part 108 must inform the FAA and all other airspace users of its successful deconfliction by way of ADSP (14 CFR Proposed § 108.190).

References

Barnhart, R. K., Marshall, D. M., & Shappee, E. (Eds.). (2021). Introduction to unmanned aircraft systems (3rd ed.). CRC Press.

Federal Aviation Administration. (2025). Normalizing unmanned aircraft systems beyond visual line of sight operations, 14 CFR Proposed §§ 108, 146. https://www.federalregister.gov/documents/2025/08/07/2025-14992/normalizing-unmanned-aircraft-systems-beyond-visual-line-of-sight-operations

Nichols, R., Mumm, H., Lonstein, W., Ryan, J., Carter, C., & Hood, J. P. (2019). Unmanned Aircraft Systems in the cyber domain: Protecting USA’s advanced air assets (2nd ed.). New Prairie Press.

Opromolla, R., & Fasano, G. (2021). Visual-based obstacle detection and tracking, and conflict detection for small UAS sense and avoid. Aerospace Science and Technology, 119. https://doi.org/10.1016/j.ast.2021.107167

Remote Identification of Unmanned Aircraft, 14 CFR § 89. (2026). https://www.ecfr.gov/on/2026-03-10/title-14/chapter-I/subchapter-F/part-89

Sabins, F., & Ellis, J. (2020). Remote sensing: Principles, interpretation, and applications (4th ed.). Waveland Press.

Said Hamed Alzadjali, N., Balasubaramainan, S., Savarimuthu, C., & Rances, E. (2024). A deep learning framework for real-time bird detection and its implications for reducing bird strike incidents. Sensors, 24(17). https://doi.org/10.3390/s24175455

Small Unmanned Aircraft Systems, 14 CFR § 107. (2026). https://www.ecfr.gov/on/2026-03-10/title-14/chapter-I/subchapter-F/part-107